In general, the higher the R-squared, the better the model fits your data. However, there are important conditions for this guideline that I’ll talk about both in this post and my next post.

Plotting fitted values by observed values graphically illustrates different R-squared values for regression models. The regression model on the left accounts for 38.0% of the variance while the one on the right accounts for 87.4%. The more variance that is accounted for by the regression model, the closer the data points will fall to the fitted regression line. Theoretically, if a model could explain 100% of the variance, the fitted values would always equal the observed values and, therefore, all the data points would fall on the fitted regression line.

R-squared cannot determine whether the coefficient estimates and predictions are biased, which is why you must assess the residual plots. R-squared does not indicate whether a regression model is adequate. You can have a low R-squared value for a good model, or a high R-squared value for a model that does not fit the data! The R-squared in your output is a biased estimate of the population R-squared.

Are Low R-squared Values Inherently Bad?

No! There are two major reasons why it can be just fine to have low R-squared values.

In some fields, it is entirely expected that your R-squared values will be low. For example, any field that attempts to predict human behavior, such as psychology, typically has R-squared values lower than 50%. Humans are simply harder to predict than, say, physical processes.

Furthermore, if your R-squared value is low but you have statistically significant predictors, you can still draw important conclusions about how changes in the predictor values are associated with changes in the response value. Regardless of the R-squared, the significant coefficients still represent the mean change in the response for one unit of change in the predictor while holding other predictors in the model constant. Obviously, this type of information can be extremely valuable. See a graphical illustration of why a low R-squared doesn't affect the interpretation of significant variables.

A low R-squared is most problematic when you want to produce predictions that are reasonably precise (have a small enough prediction interval). How high should the R-squared be for prediction? Well, that depends on your requirements for the width of a prediction interval and how much variability is present in your data. While a high R-squared is required for precise predictions, it’s not sufficient by itself, as we shall see.

Are High R-squared Values Inherently Good?

No! A high R-squared does not necessarily indicate that the model has a good fit. That might be a surprise, but look at the fitted line plot and residual plot below. The fitted line plot displays the relationship between semiconductor electron mobility and the natural log of the density for real experimental data.

The fitted line plot shows that these data follow a nice tight function and the R-squared is 98.5%, which sounds great. However, look closer to see how the regression line systematically over- and under-predicts the data (bias) at different points along the curve. You can also see patterns in the Residuals versus Fits plot, rather than the randomness that you want to see. This indicates a bad fit, and serves as a reminder as to why you should always check the residual plots.

This example comes from my post about choosing between linear and nonlinear regression.
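To see how a high R-squared can coexist with a biased fit, here is a minimal Python sketch. It does not use the semiconductor data from the post: the curved, noise-free data (`y = sqrt(x)`), the helper `r_squared`, and the sign-change count are all assumptions made purely for illustration. It fits a straight line to curved data, computes R-squared as 1 minus the ratio of residual to total sum of squares, and then counts how often the residuals change sign as a rough stand-in for eyeballing a residual plot.

```python
import numpy as np

def r_squared(y, fitted):
    """R-squared = 1 - SS_residual / SS_total (hypothetical helper)."""
    ss_res = np.sum((y - fitted) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic curved data, chosen only for illustration (no noise added).
x = np.linspace(1.0, 10.0, 50)
y = np.sqrt(x)

# Fit a straight line by ordinary least squares.
slope, intercept = np.polyfit(x, y, deg=1)
fitted = slope * x + intercept
residuals = y - fitted

r2 = r_squared(y, fitted)

# Random-looking residuals flip sign often; a systematic pattern
# (under-predict, over-predict, under-predict) flips only a few times.
signs = np.sign(residuals)
sign_changes = int(np.sum(signs[1:] != signs[:-1]))

print(f"R-squared: {r2:.3f}")
print(f"Residual sign changes across {len(x)} points: {sign_changes}")
```

The R-squared here comes out high even though the line is the wrong shape for the data, and the residuals fall into a few long runs of the same sign rather than scattering randomly around zero. That pattern is the bias a residual plot reveals and R-squared alone cannot.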


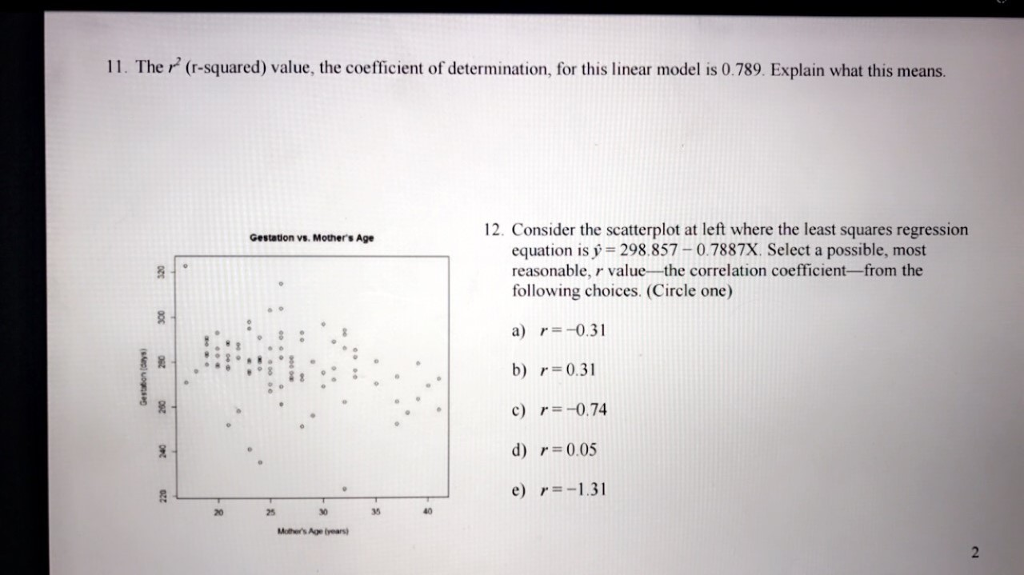
